

If AI systems keep getting more intelligent, what ultimately determines whether they can operate reliably in the real world?

That question is worth sitting with. AI’s transition from software into machines is already well documented, as is the infrastructure gap it creates. What’s less examined is what happens next, once that gap starts to close: the moment physical AI industrializes, the constraints change entirely.

For the past several years, AI progress has been measured by models: larger architectures, more training data, better benchmark scores. That focus made sense when the primary challenge was building intelligence.

But as AI moves into vehicles, robotics platforms, medical devices, and industrial systems, the challenge changes. Physical AI does not operate through models in isolation. It operates through systems: tightly integrated stacks that combine sensors, compute, storage, control logic, safety mechanisms, and lifecycle management. The model sits within this architecture, and depends on everything around it to function reliably.

Once intelligence becomes embedded in machines that interact with the physical world, the broader system becomes just as important as the model itself.

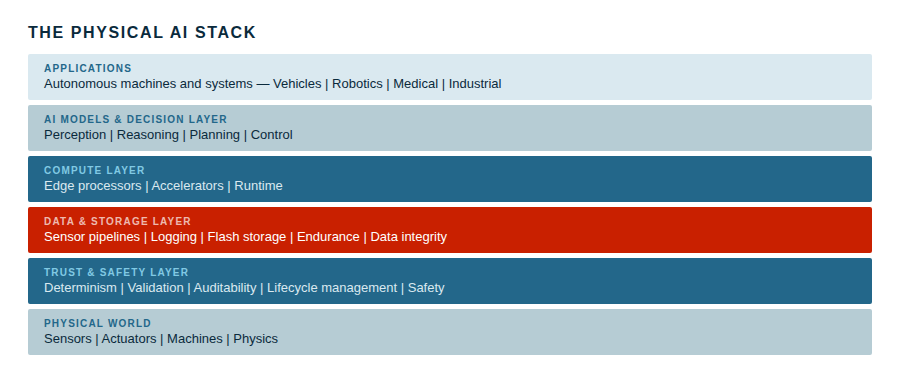

A useful way to think about this is to consider the full architecture that intelligent machines actually require.

At the foundation sits the physical world: sensors, actuators, and machines operating in real environments governed by physics. Directly above that comes a trust and safety layer responsible for determinism, validation, and predictable behavior under real-world conditions. This is where data integrity is enforced and where critical operations must behave consistently even during unexpected events such as power loss, environmental stress, and edge-case inputs.

Above the safety layer is the data management layer, which handles the sensor pipelines, telemetry logging, and long-term data integrity for the data that machines continuously generate at scale, in some deployments across flash lifespans measured in decades. This feeds into the compute layer, which provides the runtime infrastructure for real-time inference, typically at the edge where machines actually operate.
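To make the data-integrity part of that layer more concrete, here is a minimal sketch of a checksummed, append-only telemetry record format. The record layout (a little-endian length plus a CRC32 in front of each payload) and the function names `encode_record` and `decode_records` are assumptions chosen for illustration, not a description of any particular product. The reader stops at the first truncated or corrupted record, which is one simple way to tolerate a write that was interrupted partway through.

```python
import struct
import zlib
from typing import List

# Hypothetical record header: payload length and CRC32 of the payload,
# both stored as little-endian unsigned 32-bit integers.
_HEADER = struct.Struct("<II")


def encode_record(payload: bytes) -> bytes:
    """Frame a telemetry payload with its length and checksum."""
    return _HEADER.pack(len(payload), zlib.crc32(payload)) + payload


def decode_records(blob: bytes) -> List[bytes]:
    """Return every intact record from the start of the log.

    Decoding stops at the first record that is truncated (for example by
    power loss in the middle of an append) or whose checksum does not match.
    """
    records = []
    offset = 0
    while offset + _HEADER.size <= len(blob):
        length, crc = _HEADER.unpack_from(blob, offset)
        start = offset + _HEADER.size
        end = start + length
        if end > len(blob):
            break  # torn write: the payload is shorter than the header claims
        payload = blob[start:end]
        if zlib.crc32(payload) != crc:
            break  # corrupted payload: do not trust this or later records
        records.append(payload)
        offset = end
    return records
```

For example, if the final record in a log loses its last byte, `decode_records` returns only the records before it rather than raising or returning damaged data. A real deployment would likely need stronger measures (sequence numbers, redundancy, wear-aware placement), which this sketch leaves out.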

Only then do the AI models themselves enter the picture, providing perception, reasoning, planning, and decision-making. The applications that deliver functionality through autonomous machines and intelligent systems are at the top of the stack.

Most AI discussions concentrate almost exclusively on the model layer. Physical AI forces us to think about the entire stack.

The distinction matters most once systems leave the controlled environment of the data center. In digital environments, failures are often containable: services restart, workloads shift, systems recover. In physical environments, the tolerances are narrower, because a machine that stalls or misbehaves does so in the real world.

Robotics platforms must continuously interpret sensor streams without interruption. Vehicles must respond to events within milliseconds. Industrial machines must operate under sustained environmental stress while maintaining traceable operational histories. Many of these systems remain deployed for years, sometimes decades. Reliability over time is not a design aspiration; it is a baseline requirement.

Under these conditions, the engineering challenge is no longer primarily about building more capable models. It becomes about building systems that allow those models to operate dependably within real-world constraints, and over real deployment timescales.

Industries that have long built cyber-physical systems understand this instinctively. Aerospace, automotive, and industrial automation have always treated reliability as a property of the entire system, not of any single component. Sensors must produce trustworthy input. Compute must meet strict latency requirements. Storage must preserve data integrity across power interruptions. Fielded systems, their software included, must be updatable without compromising the stability of a machine that may be operating in a safety-critical context.
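As one illustration of what preserving data integrity across power interruptions can look like at the application level, the sketch below uses the common pattern of writing to a temporary file, flushing it to the device, and then renaming it over the target. The function name `atomic_write` is hypothetical, and the behavior assumes a POSIX-style filesystem with atomic rename and a storage device that honors flush requests. It is a minimal sketch under those assumptions, not a certified implementation.

```python
import os
import tempfile


def atomic_write(path: str, data: bytes) -> None:
    """Replace the file at `path` so readers see the old or the new content.

    Assumption: rename within one directory is atomic on the target
    filesystem, and fsync actually reaches stable storage.
    """
    directory = os.path.dirname(os.path.abspath(path))
    # Create the temporary file in the same directory so the final rename
    # never crosses a filesystem boundary.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".tmp-")
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(data)
            tmp.flush()
            os.fsync(tmp.fileno())  # push file contents to the device
        os.replace(tmp_path, path)  # atomically swap in the new version
    except BaseException:
        # Clean up the temporary file if anything failed before the rename.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
    # Also persist the directory entry where the platform supports it.
    if hasattr(os, "O_DIRECTORY"):
        dir_fd = os.open(directory, os.O_RDONLY | os.O_DIRECTORY)
        try:
            os.fsync(dir_fd)
        finally:
            os.close(dir_fd)
```

If power is lost before the rename, the previous file is still intact; if it is lost afterward, the new file is complete. Whether that holds end to end depends on the filesystem and on how the storage hardware handles flushes.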

AI models bring powerful reasoning into these environments. But they do not replace the engineering discipline required to make systems dependable. They add to it.

Intelligence is trained into models; reliability is engineered into systems.

Future AI development will depend on more than just models. As AI becomes embodied in machines, the physical AI data layer beneath every intelligent machine (deterministic storage, resilient data handling, and certifiable update paths) moves from background infrastructure into the critical path.

Those who engineer reliability into the systems where intelligence actually has to operate will build the future of AI.
